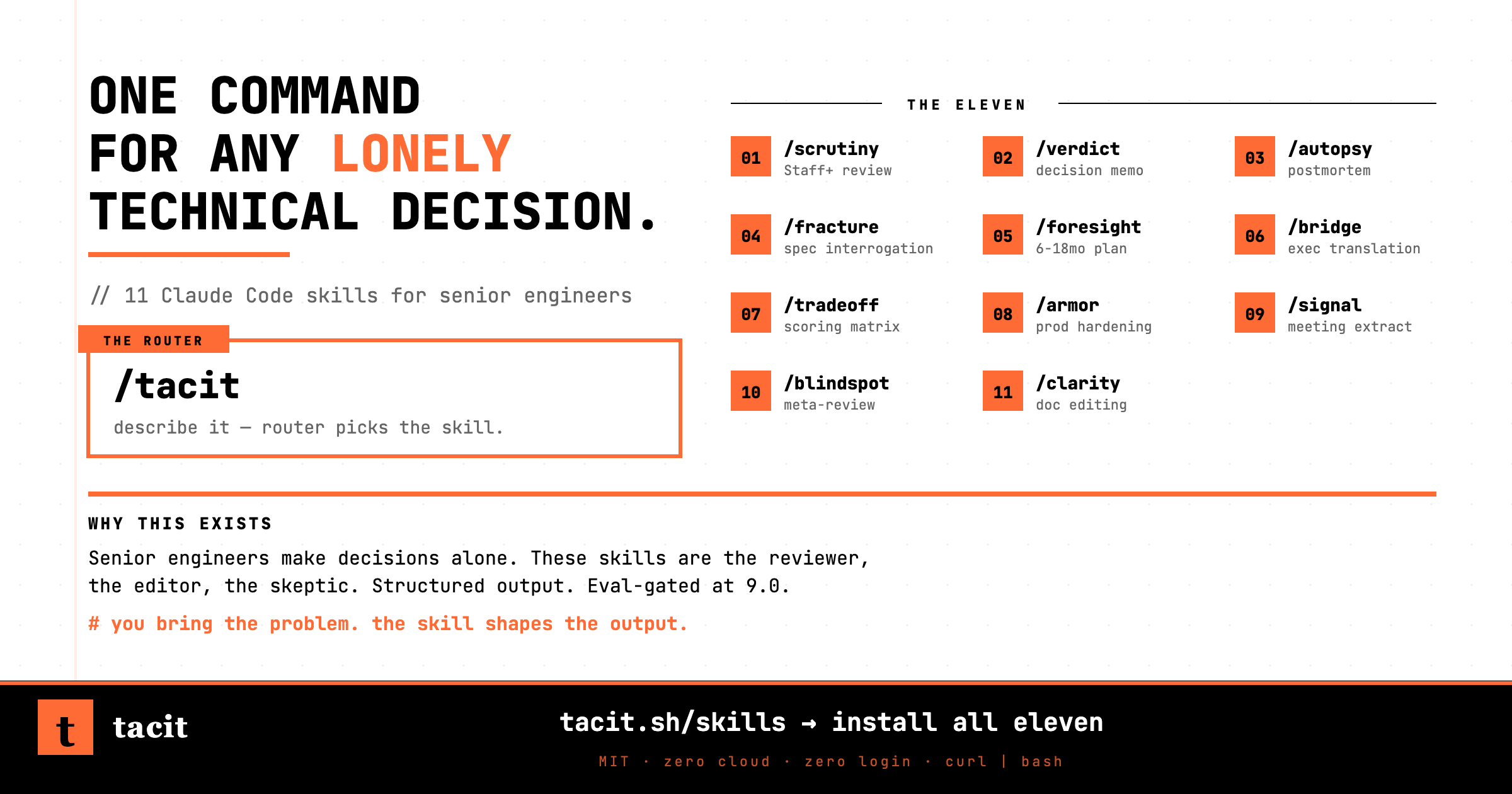

Tacit Skills — one command for any lonely technical decision

Design reviews before the review meeting. Postmortems nobody has time to run. Decision memos that don't warrant an offsite but do warrant a shared doc. Described in plain English. Structured as artifacts you can forward.

curl -fsSL https://tacit.sh/install.sh | bash ~/.claude/commands/. Re-run the same command to update. Audit the script first: tacit.sh/install.sh.

v0.2 — what's in this release.

Eleven skills. The full collection. Two independent reviewers graded every skill. The router had to clear a higher bar because every request goes through it. Every new skill passed on first review.

Every skill scored 8.5 or higher on average, with no single dimension below 8.0. The router was held to a stricter ≥9.0. Methodology is detailed in Evaluation methodology below.

The generic chatbot produces generic chatbot output.

Senior engineers make a specific class of decision alone.

Design review the week before the design review meeting. Postmortem at 11pm because the all-hands is tomorrow. Decision memo for a build-vs-buy call that doesn't warrant an offsite but does warrant a shared doc that survives the meeting.

These decisions are too small for a full consultation and too consequential to guess at. The default alternative is a blank chat window and a generic helpful assistant. That produces output that reads like "have you considered the tradeoffs?" — which is not what the situation needs.

A skill in this collection is what happens when you take the specific expert you'd want in the room, encode their voice and questioning discipline and structured output format, and give them one command each.

Not pair-programming tools. Not chatbot wrappers with a clever preamble. Not generic productivity skills dressed up in engineer voice. Eleven distinct personas, each calibrated to one decision type.

What makes each skill unique.

Every skill shares the same underlying discipline (see Shared discipline). Eleven characters, not one chatbot eleven times.

Architecture review by a paranoid Staff+ engineer. Walks through your system the way a principal walks through a post-incident Zoom.

Output A Failure Modes table with Trigger / Impact / Current Mitigation. A Scaling Assessment with numbers, not adjectives. A Fix These First list specific enough to turn into tickets.

Turns messy thinking into a decision memo that survives the meeting. State the decision as a question. Steelman every option. Name the stakeholder who has to agree.

Output A memo. TL;DR. Options with Reversibility ratings. Constraints Applied showing which options get eliminated by which constraint. Stakeholder Concerns matrix. A Recommendation that cites at least one constraint or stakeholder by name.

Reconstructs an incident or near-miss into a postmortem that teaches the next on-call something. Timeline must have signals. Root cause must be a mechanism.

Output A full postmortem. What Happened in past-perfect. Timeline with timestamps + signals. Root Cause that names the code path, the cache, the specific assumption. Action Items with owners, dates, and what each one prevents.

Closes your spec. Asks you to explain it. Where you hesitate, that's a fracture. Surfaces hidden dependencies, contradictions, scope creep dressed as MVP.

Output A Fracture Report. Verdict SOUND / FRACTURED / UNSOUND. Fractures typed by category. What's Sound so the report isn't pure demolition. Testability Gaps with Signal-of-Success and Signal-of-Quiet-Failure per requirement.

Plan your system's next 6-18 months like someone who has watched three platform migrations and seen two fail. Names the forces, sequences the bets, fills the sacrifice column.

Output A Strategic Assessment. Forces Map table. Recommended Sequence with mandatory sacrifice column. What Breaks If You Sequence Wrong. Constraints Respected. Deferred Bets. What I Can't See.

Translates technical findings into a brief that lands with the board, the exec, or the customer — without dumbing down. The audience gets exactly what they need to act.

Output An Executive Brief. What Happened (business-first). Why It Matters (impact table). What We Need From You (specific asks with deadlines and consequences). Questions You'll Get Asked.

Side-by-side comparison engine with explicit criteria weighting. Build vs buy, vendor selection, architecture choice — scored, not vibes. No recommendation. Comparison only.

Output A Weighted Scoring Matrix with 1-5 integer scores per cell and weights derived from your forced ranking. Sensitivity Check naming the exact re-weighting that flips the winner. Hidden Costs. Gaps.

Production hardening plan where every recommendation comes with a detection mechanism. If you can't detect it, you can't fix it. Security, observability, reliability, deployment safety.

Output Hardening Items table with mandatory Detection Mechanism column. Detection Coverage Map. Deployment Safety. Incident Readiness. Harden These First (change + detection + effort + consequence).

Extracts every decision, owner, deadline, and unresolved thread from your meeting before the room's memory drifts. Owners are names, not teams. Fake consensus gets called out.

Output Decisions Made table (Decision/Owner/Deadline/Verification). Action Items linked to parent decisions. Unresolved section for things that felt decided but weren't. Meeting Effectiveness score.

Meta-review that finds what's absent, not what's wrong. Reviews any artifact — plan, design, decision, strategy — for the perspectives nobody in the room represents.

Output Typed Blindspots table (STAKEHOLDER / TECHNICAL / TIMELINE / SECOND-ORDER / INCENTIVE). Coverage Map showing what's covered well AND what's missing. Unstated Assumptions. Fix These First.

Document clarity review by a technical editor who has reviewed 500 RFCs. Finds where readers give up, where jargon excludes, where structure buries the decision.

Output Structural Diagnosis. Jargon Audit table (term/location/problem/replacement). Rewrite Samples with verbatim before/after. Reading-Level Assessment (current/target/gap). What's Working.

The router. One command. Describe what you're working on in plain English. Get a short plan that runs the right skills in the right order — or an honest decline with a real choice.

Asks at most two questions. Proposes a plan with the exact skills it will run, the reason for each, the inputs each needs, the dependencies between them. Waits for yes before running anything. Respects sequencing rules automatically. Decomposes oversized requests instead of chaining 5+ skills.

Two decline cases

"No Tacit skill fits cold-email sequences. Closest external: /marketing-skills:cold-email."

"No Tacit skill fits. I don't know an external skill that does either." No apology. No invented skill. No force-fitting.

How quality is measured.

Every skill is graded by two independent reviewers working from a five-part checklist before it ships — one optimizes for specificity and shareability, the other for actionability and clarity.

The bar is concrete. Content skills pass at ≥8.5 average with no single dimension below 8.0. The router is held to ≥9.0 because it gates every interaction with the collection. A skill that doesn't clear the bar gets revised against specific feedback and re-reviewed.

The checklist covers: whether the skill asks the right questions, whether its findings reference specific things you said, whether its voice stays consistent, whether its output follows the promised structure, and whether the result is actually worth forwarding to a colleague.

If three rounds don't land it, the design is wrong — not the prompt.

Every skill follows the same build sequence.

Specify the persona and the decision type. Design test fixtures that exercise different branches. Draft the SKILL.md. Simulate real sessions. Review against the rubric. Revise until the bar clears. Ship.

What makes this work across the collection is discipline carried forward. Lessons from earlier skills become constraints in the baseline for later ones — voice rules, banned hedges, interrogation depth, verdict structure. The same failure doesn't ship twice.

Install. Run. Forward the output.

Each skill is a single markdown file. Drop it into ~/.claude/commands/ and the command is live. No subscription. No cloud. No login. Works in Claude Code out of the box.

all eleven (recommended)

curl -fsSL https://tacit.sh/install.sh | bashspecific skills only

curl -fsSL https://tacit.sh/install.sh | bash -s tacit

curl -fsSL https://tacit.sh/install.sh | bash -s scrutiny verdict foresightverify it worked

ls ~/.claude/commands/tacit.md && echo "installed"Prints installed if the router landed. If it's missing, re-run the installer — silent download failures are possible behind restrictive proxies.

update later

curl -fsSL https://tacit.sh/install.sh | bashSame command — the installer overwrites existing files.

audit before running (recommended for any curl | bash)

curl -fsSL https://tacit.sh/install.sh | less

curl -fsSL https://tacit.sh/install.sh -o install.sh && bash install.shmanual — clone and copy

git clone https://github.com/ketankhairnar/tacit-skills

cp tacit-skills/skills/*.md ~/.claude/commands/use it in Claude Code

/tacit had a 34-min outage last night, need the postmortem before all-hands/autopsy (timeline + root cause) → /bridge (brief for all-hands)

/tacit deciding between Mixpanel, Amplitude, and building on ClickHouse/tradeoff (scoring matrix) → /verdict (decision memo)

/tacitThe skills are portable.

The skills are pure prompts — no tool-use, no MCP calls, no Claude Code-specific APIs. Only the install path and slash-command invocation are Claude Code-specific. The SKILL.md content works as a system prompt in any LLM agent that takes one.

OpenAI Codex CLI

Drops in ~/.codex/prompts/. Strip the YAML frontmatter:

mkdir -p ~/.codex/prompts

for skill in scrutiny verdict autopsy fracture foresight bridge tradeoff armor signal blindspot clarity tacit; do

curl -fsSL "https://raw.githubusercontent.com/ketankhairnar/tacit-skills/main/skills/${skill}.md" \

| sed '1,/^---$/d' | sed '1,/^---$/d' \

> ~/.codex/prompts/${skill}.md

doneOpenCode

Drops in ~/.config/opencode/command/ (global) or .opencode/command/ (per-project):

mkdir -p ~/.config/opencode/command

for skill in scrutiny verdict autopsy fracture foresight bridge tradeoff armor signal blindspot clarity tacit; do

curl -fsSL "https://raw.githubusercontent.com/ketankhairnar/tacit-skills/main/skills/${skill}.md" \

-o ~/.config/opencode/command/${skill}.md

doneGeneric ChatGPT / Claude / Gemini chat

Paste the contents of any SKILL.md as the system prompt (or first message). The skill follows its protocol from there.

Not for: code review (use your IDE), long pair-programming sessions, anything that needs filesystem or codebase access. These are prompt-only skills — they work on what you tell them, not what's in your repo.

The collection is complete. The platform is not.

All eleven planned skills are shipped. The roadmap from v0.1 is done. What remains is infrastructure, distribution, and whatever real usage breaks.

- → Usage-driven iteration: skills evolve based on real decline patterns and user feedback. If a skill consistently misses a class of input, it gets a revision.

- → Multi-target installer: if there's signal that engineers want first-class Codex / OpenCode install, the installer grows a

--targetflag. - → R2-hosted skills: if GitHub raw rate limits or latency become an issue, the installer flips to a Cloudflare R2 bucket. End-user one-liner stays the same.

- → Versioned skill paths: for users who pin a specific skill version (e.g., if a skill update regresses a behavior they relied on).

- → New skill candidates: if a pattern of decline requests reveals a 12th skill the collection should have had, it gets built through the same sequence as the first eleven.

The bar is: a skill either clears review, or it doesn't ship.